Naming the Machine

Editor’s Note: This essay emerged from conversations at Helena’s 2025 Summit in Valle de Bravo, Mexico — a gathering of leaders from technology, policy, science, and the arts convened to collaborate on a subset of pressing societal challenges. It is not a summary of the Summit or a consensus statement; it reflects the author’s own exploration of ideas shaped by those discussions.

“You can’t [change] paradigms through instructions or incentives or data flows or rules of the system. You need to learn to see the world differently, to generate different mental models, to populate your mind with different ideas. You do that through exposure to patterns, stories, numbers, and words that repeatedly hit the heart and the gut… One way is to keep pointing at the anomalies and failures of the old paradigm. Another is to keep speaking louder and louder – even joyfully, with assurance – from the new one.” –Donella Meadows, “Leverage Points: Places to Intervene in a System” (1997)

In her landmark 1997 essay on the architecture of structural intervention, Meadows identified paradigm change among the highest-order leverage points in any complex system. This paper offers a preliminary interrogation of the system currently being erected through the convergence of artificial intelligence, social media, and enterprise technology into an infrastructure of unprecedented reach with minimal accountability. Humanity is now beset by a limbic-colonizing social media, exacerbated by the rise of “digital Leviathans” – dominance-seeking companies – allied with governments. In pursuit of dominance or avoidance of being dominated, these forces have set for themselves the task of attaining Artificial General Intelligence (AGI). In their lurch toward this fetish, they risk, and seem willing to risk, the rise of neuratrophies, the destruction of the social order, the undermining of democratic root systems, and worse: the replacement or extinction of humankind.

If Meadows is right that paradigms shift through stories, patterns, and language reaching into the heart and gut, then I propose three points of narrative reorientation for the shift we require.

Point I: The Danger Is Not Ahead of Us

In hopes of frustrating or preventing human conscription into a “Stepford” reality (as Bill Joy described in his 2000 Wired magazine article: “Why the Future Doesn’t Need Us”), we seem to be awaiting AGI, superintelligence, or quantum computing as the moment when the threat becomes real.

But this is folly.

The cascading deployment of social media, now infused with AI, is a distortive life-field that dislodges us from natural temporalities and accelerates the dissociation of the cultural and spiritual scaffolding of our social world; then, in the absence of these stabilizing factors, it pits us against each other unrestrained and, excavating human and humane interiority, undermines our social modes. This is happening now, without AGI, without superintelligence, and in spite of Large Language Model limitations.

One tires of warning that social media and enterprise technologies need not be sentient to enact their often inscrutable damage. Social media is fairly stupid and predictably self-cannibalizing through its bot armies, which undermine its own demographic market claims, yet it has managed to corrupt nearly two generations of Western minds and centered rage in the public square, whilst fueling public anxiety as never before. It does this whilst aggregating datasets on humanity that no known entity has ever possessed – a process uncontemplated in our legal traditions or religious systems, operating at the speed of light, beyond the adaptive capacities of our social or psychological modes.

In practical terms, labor is being disintermediated from productivity and capital. To wit, Salesforce has replaced 4,000 customer service workers with its own AI agents – its CEO boasting he “needed less heads” – while PwC shed thousands of positions in the very year it invested $1.5 billion in AI capabilities. Across the technology sector, nearly 37,000 jobs were cut in the first quarter of 2026 alone. As “dumb” as today’s technology is, still, it can aggregate biometric data, generate digital gulags, cultivate a surveillance canopy, undermine educational assessments, and corrupt the last eddies of democracy, all without achieving superintelligence.

Awaiting AGI as the coming or imminent danger has deceived us into thinking the threat is ahead of us, when in fact we are living through its effects in this moment.

Point II: The Paradigm is Contingent on Language

The menace is upon us (though not at full strength), and whilst technical and regulatory responses may take years, there is something each of us can and should do now: we must refuse the language that has already begun to cede human standing to machines. Too often, even in the most benign ways, we speak of these technologies in equivalence with human beings: we say the AI thinks, the model understands, the system decided, thus performing a soft ontological promotion. Each such concession, however small, redraws the boundary between tool and agent in the wrong direction. To counteract this erosion, I propose we adopt an agential hygiene: the disciplined insistence that language must always trace accountability back to living decision-makers.

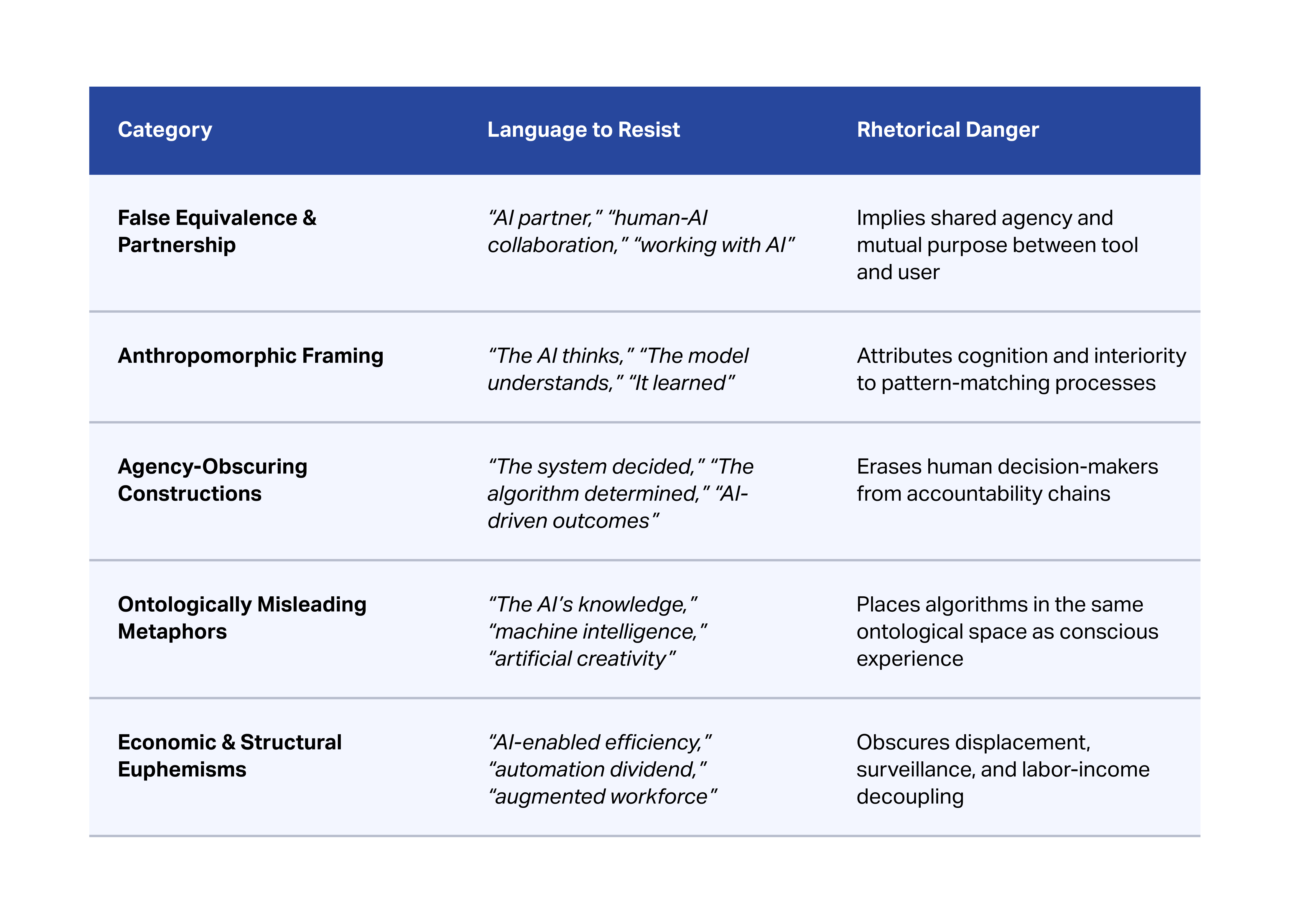

The following terms and phrases, categorized here by the rhetorical danger they pose, must be resisted:

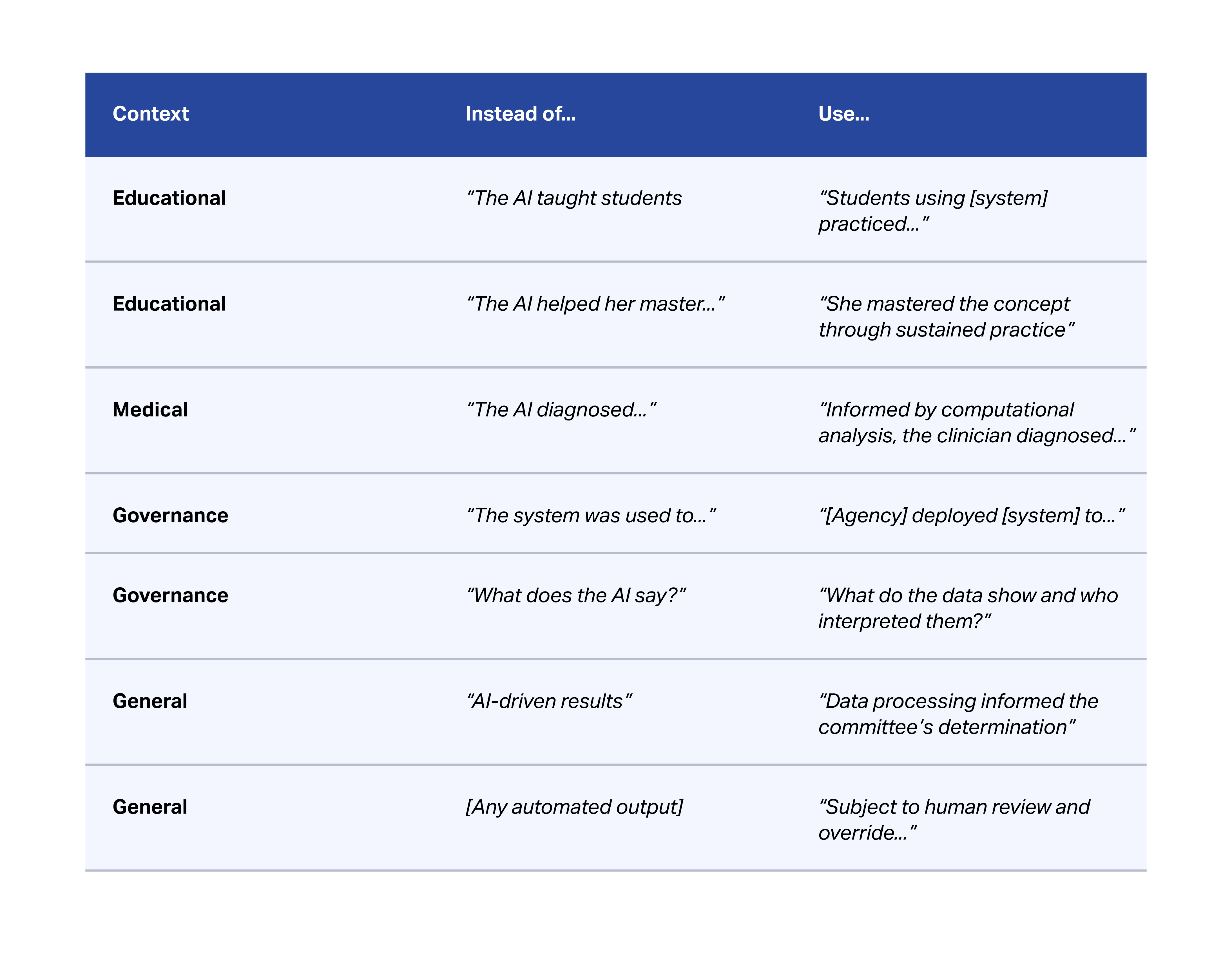

The chart below enumerates functional alternatives that preserve human agency:

The examples above represent a small subset of the ways in which authority and agency are conscripted by the predominant rhetoric of the techno-industrial complex. The nodes of conscription are myriad. Taken together, they do not describe a worldview; they construct one, until we find ourselves enclosed within these citadels of language: structures of our own making that wall us off from the life world.

We must resist the euphemistic life cycle by which a technical term (“neural network”) drifts into metaphor (“the network learned”), hardens into reification (“the AI’s knowledge”), and arrives at false agency (“the AI concluded”), becoming resolution.

To arrest this drift, a set of core syntactic commitments must govern how we speak and write about these technologies and the processes into which we embed them:

Always:

- Name the human agent
- Attribute uncertainty to human judgment
- Trace error to human deployment
- Center the learner, and attribute learning to the learner
- Position technology as environment, never as actor
- Preserve accountability chains from decision-maker to outcome
- Separate data processing from deliberation
- Mark every automated output as contingent, subject to human review and override

Institutionally, this demands cross-domain enforcement: mandating compliant terminology in publications, grants, and press releases; requiring vendors to describe products in functional, non-anthropomorphic terms; and training staff to recognize and correct “creeping personifications” whilst cultivating metalinguistic vigilance (the habit of self-correction when one catches oneself saying “the AI thinks”).

In our pursuit of the technologically ambitious, we must be linguistically austere, ensuring that the words we use preserve a core truth: tools serve.

They do not share; they function.

They extend human capacity; they do not possess capacity of their own.

Technology may be maximally deployed but minimally credited. Invariably, it should be referenced as a sophisticated instrument; never a partner, never a peer, never possessing standing in the community of moral consideration.

The resistance to techno-susceptibility is not Luddite; it is hierarchical. Technology serves diagnostic and coordination functions for living beings, who remain the sole locus of value and the sole bearers of situational grace and responsibility. What we resist is not technology but the category confusions that hide the leverage points from which paradigm shifts are induced – metaphysical errors that, often by stealth, place pattern-matching algorithms in the same ontological and moral space as sentient, suffering, striving living beings.

Point III: The Arithmetic of Power and the Logic of Exclusion

On February 20th, 2026, Sam Altman of OpenAI gave an interview to the Indian Express in which he said: “People talk about how much energy it takes to train an AI model… But it also takes a lot of energy to train a human. It takes like 20 years of life and all of the food you eat during that time before you get smart.”

If you are asking why we must be vigilant in our language, as demanded in Point II, take note: we are in a narrative war in which human beings are being compared to automata and defined by an investment-utility equation. A competitive nexus is being fashioned in which human beings and human life are being rendered commensurable with the things humans create.

But even on these grounds, Altman is wrong. Consider the arithmetic: a human consumes roughly 2,000 calories per day. Over twenty years, that amounts to approximately 17,000 kilowatt-hours of total food energy. Training GPT-4 consumed an estimated 50 gigawatt-hours of electricity, the equivalent of roughly 3,000 such twenty-year human “training” periods for a single model run. Altman’s comparison collapses on its own terms. Yet its attraction persists within a sector that monetizes its users and repeatedly construes human life in the idiom of optimization and return.
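For readers who wish to check the figures, the arithmetic can be sketched as follows. This is a rough, illustrative calculation only: it assumes that “calories” means food calories (kilocalories), a 365-day year, and the standard conversion of 1 kcal to about 1.163 × 10⁻³ kWh; it takes the 50 GWh training estimate as given above; and the symbols E_human and E_model are labels introduced here for convenience.

```latex
\begin{align*}
E_{\text{human}} &\approx 2000\ \tfrac{\text{kcal}}{\text{day}} \times 365\ \tfrac{\text{days}}{\text{yr}} \times 20\ \text{yr}
  = 1.46 \times 10^{7}\ \text{kcal} \\
 &\approx 1.46 \times 10^{7}\ \text{kcal} \times 1.163 \times 10^{-3}\ \tfrac{\text{kWh}}{\text{kcal}}
  \approx 1.7 \times 10^{4}\ \text{kWh} \\[4pt]
E_{\text{model}} &\approx 50\ \text{GWh} = 5 \times 10^{7}\ \text{kWh} \\[4pt]
\frac{E_{\text{model}}}{E_{\text{human}}} &\approx \frac{5 \times 10^{7}\ \text{kWh}}{1.7 \times 10^{4}\ \text{kWh}}
  \approx 2.9 \times 10^{3}
\end{align*}
```

On these assumptions the ratio comes to a little under 3,000, which is consistent with the rounded figure in the text.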

Put simply: there is not enough energy on earth to power, at scale, the systems being curated by the digital Leviathans. Should we then breathe sighs of relief that the limits of power generation have saved humanity?

No.

History is replete with examples of what happens when resource scarcity constrains power: those who seek dominance do not moderate their aims. They narrow the circle of who benefits. They invent “in” and “out” groups. They stratify, exclude, and cull.

As I argued in recent lectures for the AI Governance Caravan (2025), in association with the Beijing Institute of Technology (BIT), universal connectivity is thermodynamically unviable. And the constraints compound, for even where energy might suffice, the decoupling of labor from income would engender social and economic chaos on a scale never before seen. Continuous digital immersion severs humans from circadian and seasonal rhythms essential to biological regulation, whilst degrading cognitive capacities, rendering people not merely unproductive, but almost childlike and nearly completely socially and politically malleable.

Yet even to enumerate these harms is to remain on Altman’s terms, arguing about efficiency, capacity, and output. The deepest objection to his comparison is not mathematical; it is moral. A child’s twenty years of growth – of suffering, curiosity, love, failure, wonder – are not an investment cycle awaiting a return. Human value is not to be cashiered; a life is not optimizable as a training run and does not require justification by output.

Conclusion

If we are to shift the current paradigm, we must become aware of its stages of human decentering. We must enter a program of narrative discipline, recognizing that the operational demands of the Leviathans, now a quotidian hegemon, do not represent limits of their power, but rather a reordering of who that power is willing to include. Facing this reordering, we are called upon to defend what cannot be computed: that human life is of ultimate value, that dignity is not output, and that no measure of technological ambition absolves us of the obligation to ask whom it serves and, at once, whom it discards. If all we imagined for the future, we experienced at Helena’s Summit in Valle de Bravo, Mexico – a human commonwealth in nature – we are now compelled to radiate it outward with the urgency of those who understand that what diminishes any life diminishes the possibility of life itself.

“Never send to know for whom the bell tolls; it tolls for thee.”

About the Author:

Ambassador Professor Gilbert Morris is Bahamas National Public Reader and Vice Chancellor, Bahamas Alrae Ramsey Institute for Foreign Affairs (BARIFA). He was Professor at George Mason University, where he taught in four faculties. A leading thinker on global finance, he was advisor to the Swiss Private Bankers Association, and completed a landmark study on China-Caribbean Sea Trade for China’s Vice Premier. Morris also served as Economic Advisor, Ministry of Finance, and was Special Envoy, House of Lords All Party Committee, U.K., from The Office of the Premier of Turks & Caicos Islands.

Most recently, Morris headlined the “AI Governance Caravan” at Beijing Institute of Technology, China. His current research focuses on cognitive neuropsychological impacts of scaled technologies. He is an author of the NYT bestseller Rescue America. His forthcoming book Friston’s Ontology (2027) advances a decades-long philosophical project rooted in neuroscience and ontological ethics. In July 2026, he will release a landmark peer review of Sebastian Mallaby’s intellectual biography of DeepMind’s Demis Hassabis, The Infinity Machine, for the University Bookman.